Why Generic AI Fails at Allergy Reasoning (And What Allergists Need Instead)

A 45-year-old patient presents with chronic rhinitis, a positive skin prick test (SPT) to dust mites, and a history of oral allergy syndrome to stone fruits. They’re asking about starting a new medication while continuing subcutaneous immunotherapy. How would a general AI assistant handle this consultation?

Recent research published in multiple medical journals reveals a concerning pattern: large language models (LLMs) consistently struggle with the nuanced clinical reasoning that defines allergy and immunology practice. The problem isn’t just accuracy—it’s the fundamental mismatch between how general AI processes information and how allergists think through complex immunologic cases.

The Reasoning Gap in General Medical AI

A comprehensive study examining LLM performance on physician reasoning tasks found that while these models excel at pattern recognition and information retrieval, they falter when faced with the multi-system, temporal reasoning required in allergy practice. The research highlighted specific failure modes that allergists encounter daily:

Cross-reactivity assessment: General AI struggles to connect seemingly unrelated allergen exposures. When a patient reports reactions to latex and avocado, the AI might document these as separate issues rather than recognizing the well-established latex-fruit syndrome pattern.

Temporal immunologic patterns: LLMs have difficulty tracking how allergic sensitization evolves over time. A patient’s progression from eczema to food allergy to asthma and allergic rhinitis (the classic atopic march) often gets fragmented into isolated episodes rather than understood as a connected immunologic trajectory.

Dosing complexity in immunotherapy: The intricate decision-making around immunotherapy protocols, dose adjustments based on reactions, and seasonal modifications requires clinical judgment that general AI consistently misses.
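The cross-reactivity failure mode described above can be made concrete with a small sketch. The lookup table, its structure, and the function name are all assumptions made for illustration; the table holds only the two pairings this article mentions and is not a clinical reference.

```python
# Illustrative and deliberately incomplete lookup table (an assumption for
# this sketch, not a clinical reference). Each syndrome names a primary
# sensitizer and the related foods mentioned in this article.
CROSS_REACTIVITY_SYNDROMES = {
    "latex-fruit syndrome": {"primary": "latex", "related": {"avocado"}},
    "pollen-food allergy syndrome": {"primary": "birch pollen", "related": {"apple"}},
}


def group_reported_allergens(reported):
    """Split reported triggers into syndrome clusters and unlinked items."""
    normalized = {item.strip().lower() for item in reported}
    clusters = {}
    linked = set()
    for name, syndrome in CROSS_REACTIVITY_SYNDROMES.items():
        hits = normalized & syndrome["related"]
        # Only form a cluster when the primary sensitizer AND a related
        # food are both reported by the patient.
        if syndrome["primary"] in normalized and hits:
            clusters[name] = sorted({syndrome["primary"]} | hits)
            linked |= set(clusters[name])
    return clusters, sorted(normalized - linked)


clusters, unlinked = group_reported_allergens(["Latex", "avocado", "dust mite"])
print(clusters)  # {'latex-fruit syndrome': ['avocado', 'latex']}
print(unlinked)  # ['dust mite']
```

The point of the sketch is the shape of the output: latex and avocado come back as one linked finding rather than two separate line items, while dust mite stays on its own.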

Why Allergy Reasoning Is Different

Allergy and immunology demands a unique form of clinical thinking that combines pattern recognition with mechanistic understanding. Consider these scenarios that challenge general AI:

Multi-allergen interpretation: When skin testing reveals eight positive results, an experienced allergist immediately distinguishes between clinically relevant sensitizations and cross-reactive patterns. General AI tends to treat each positive result with equal weight, missing the clinical forest for the immunologic trees.

Risk stratification nuances: The difference between a patient who can safely undergo an oral food challenge and one who requires strict avoidance comes down to subtle clinical indicators, such as reaction timing, cofactor involvement, and symptom progression, that require specialty training to interpret accurately.

Treatment sequencing: Deciding whether to start with environmental control, medications, or immunotherapy involves understanding each patient’s unique immunologic profile and lifestyle factors. General AI often defaults to algorithmic approaches that miss the personalized medicine aspect of allergy care.

The Path Toward Specialty-Trained AI

Emerging research suggests that the solution isn’t more powerful general AI, but rather AI systems trained specifically on allergy and immunology workflows. These specialty-focused tools demonstrate superior performance in several key areas:

Contextual allergen mapping: Specialty AI can recognize that a patient’s birch pollen sensitivity connects to their oral symptoms with apples—understanding that these aren’t separate allergies but manifestations of pollen-food allergy syndrome.

Protocol-aware documentation: Rather than generic medical notes, specialty AI understands allergy-specific documentation needs—tracking immunotherapy doses, recording wheal measurements with proper controls, and maintaining longitudinal allergen exposure histories.

Clinical decision support: Specialty-trained systems can surface relevant guidelines and considerations specific to allergy practice, such as when to consider component testing or how seasonal patterns might affect treatment timing.

What This Means for Allergy Practice

The research findings have practical implications for how allergists approach AI adoption. Generic medical AI tools, while impressive in their scope, often create more work for specialists who must correct misinterpretations and fill in clinical gaps.

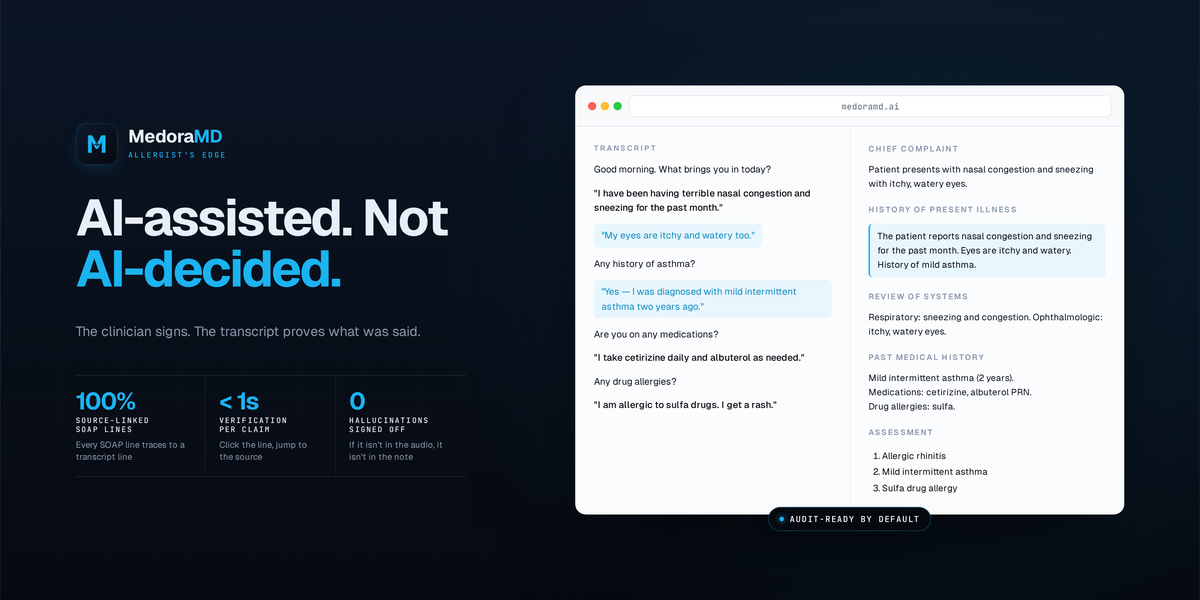

Instead, the evidence points toward AI systems designed from the ground up for allergy practice—tools that understand the specialty’s unique workflows, terminology, and clinical reasoning patterns. These systems don’t just transcribe what happens in an allergy encounter; they participate meaningfully in the clinical process.

For example, when documenting skin prick tests, specialty AI can automatically validate that appropriate positive and negative controls were included, flag unusual wheal patterns that might indicate technique issues, and structure the results in formats that support clinical decision-making rather than just record-keeping.
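As a rough sketch of the control validation described above, the check could run before any allergen wheal is interpreted. Everything here is hypothetical: the class and function names are invented for illustration, and the 3 mm thresholds are assumptions that a real system would take from the clinic's own protocol.

```python
from dataclasses import dataclass
from typing import Dict, Optional

# Assumed thresholds for illustration only, not clinical guidance.
MIN_POSITIVE_CONTROL_MM = 3.0  # histamine wheal smaller than this is flagged
MAX_NEGATIVE_CONTROL_MM = 3.0  # saline wheal at or above this is flagged
POSITIVE_MARGIN_MM = 3.0       # allergen wheal must exceed saline by this much


@dataclass
class SkinPrickPanel:
    histamine_mm: Optional[float]  # positive control, None if not recorded
    saline_mm: Optional[float]     # negative control, None if not recorded
    allergen_wheals_mm: Dict[str, float]


def validate_controls(panel):
    """Return a list of control problems; an empty list means the panel is usable."""
    issues = []
    if panel.histamine_mm is None:
        issues.append("missing positive (histamine) control")
    elif panel.histamine_mm < MIN_POSITIVE_CONTROL_MM:
        issues.append("positive control did not react")
    if panel.saline_mm is None:
        issues.append("missing negative (saline) control")
    elif panel.saline_mm >= MAX_NEGATIVE_CONTROL_MM:
        issues.append("negative control reacted")
    return issues


def interpret(panel):
    """Report positives only when both controls pass validation."""
    issues = validate_controls(panel)
    if issues:
        return {"valid": False, "issues": issues, "positives": []}
    positives = sorted(
        name
        for name, mm in panel.allergen_wheals_mm.items()
        if mm - panel.saline_mm >= POSITIVE_MARGIN_MM
    )
    return {"valid": True, "issues": [], "positives": positives}


panel = SkinPrickPanel(6.0, 0.0, {"dust mite": 7.0, "cat": 1.5})
print(interpret(panel))
# {'valid': True, 'issues': [], 'positives': ['dust mite']}
```

Refusing to report positives when a control fails is a design choice in this sketch: it pushes the technique question to the clinician instead of silently recording results that may not be interpretable.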

The Integration Challenge

Perhaps most importantly, the research highlights why allergists need AI tools that work together rather than in isolation. A scribe that doesn’t understand what the skin testing revealed creates documentation gaps. An allergen tracking system that can’t access the clinical notes misses crucial context.

The future of AI in allergy practice likely belongs to integrated systems where each component—documentation, testing, patient education, follow-up planning—operates on shared clinical context and specialty-specific understanding.
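One way to picture "shared clinical context" is a single encounter record that the testing component writes to and the documentation component reads from. The sketch below is an assumption about how such a design might look; the class, field, and function names are hypothetical and do not describe any particular product.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class EncounterContext:
    """Hypothetical shared record that every component reads and writes."""
    patient_id: str
    skin_test_positives: List[str] = field(default_factory=list)
    note_sections: Dict[str, str] = field(default_factory=dict)


def record_skin_test(ctx, positives):
    """Testing component: store the interpreted results on the shared record."""
    ctx.skin_test_positives = sorted(positives)


def draft_objective_section(ctx):
    """Documentation component: build note text from the same shared record."""
    if ctx.skin_test_positives:
        text = "Skin prick test positive to: " + ", ".join(ctx.skin_test_positives) + "."
    else:
        text = "No skin prick test results recorded for this encounter."
    ctx.note_sections["objective"] = text
    return text


ctx = EncounterContext(patient_id="demo-001")
record_skin_test(ctx, ["dust mite", "birch pollen"])
print(draft_objective_section(ctx))
# Skin prick test positive to: birch pollen, dust mite.
```

Because both functions operate on the same object, the note cannot drift out of sync with the test results, which is the documentation gap the paragraph above describes.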

This research validates what many allergists have experienced firsthand: general AI tools, while technologically impressive, often fall short of the clinical depth required for specialty practice. The path forward involves AI systems built by allergists, with allergists, for the specific challenges of allergy and immunology care.

As the field continues to evolve, allergists have an opportunity to shape AI development in ways that genuinely support clinical excellence rather than simply automating existing inefficiencies. The question isn’t whether AI will transform allergy practice—it’s whether that transformation will be driven by tools that truly understand what allergists do.

Specialty-trained AI systems like Medora Skin Testing demonstrate this approach in practice. Rather than generic image analysis, the system understands allergy-specific protocols—automatically validating histamine and saline controls, measuring wheals according to established guidelines, and structuring results for seamless provider review. When Medora Scribe documents the encounter, it already knows what the skin testing revealed, creating unified clinical context rather than fragmented data points. This integration reflects the specialty-specific reasoning that general AI consistently misses.

What challenges have you encountered when general AI tools try to handle complex allergy cases in your practice?
