# Building AI in a Live Allergy Clinic: 6-Month Reality Check

Six months ago, we started deploying AI tools in a live allergy clinic. This was not a pilot program or a controlled study. It was actual patient care, with real workflows and allergists making split-second decisions while AI systems attempted to keep up.

The experience taught us more about clinical AI than any amount of theoretical planning could have. Here’s what we learned, including what didn’t work as expected.

## The Documentation Reality Gap

Theory: AI scribes will eliminate documentation burden.

Reality: AI scribes reduce documentation burden when they understand allergy-specific terminology.

In our first month, the AI consistently missed nuanced allergy language. “Positive wheal at 15 minutes” became “patient reported improvement at 15 minutes.” “Cross-reactivity between birch and apple” was transcribed as “patient has birch and apple allergies.”

The breakthrough came when we realized generic AI scribes don’t understand the clinical context that makes allergy documentation precise. An allergist saying “4mm wheal, 2mm flare” isn’t just describing measurements — they’re documenting clinical significance that determines treatment decisions.

What worked: Training AI specifically on allergy terminology and workflow patterns. Our Medora Scribe now recognizes skin test measurements, immunotherapy protocols, and cross-reactivity discussions because it was built with allergists, not adapted from general medicine.
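To make the point about measurement language concrete, here is a minimal Python sketch of pulling structured readings out of a dictated phrase such as "4mm wheal, 2mm flare". It is an illustration only, not how Medora Scribe works: the `SkinTestReading` type, the `extract_readings` function, and the regular expression are all hypothetical, and a production scribe would need to handle far more phrasing variants than this pattern does.

```python
import re
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class SkinTestReading:
    """One dictated skin test reading (hypothetical structure)."""
    wheal_mm: float
    flare_mm: Optional[float] = None


# Matches "4mm wheal, 2mm flare", "4 mm wheal and 2 mm flare", or just "6 mm wheal".
_READING = re.compile(
    r"(?P<wheal>\d+(?:\.\d+)?)\s*mm\s+wheal"
    r"(?:\s*(?:,|and|with)?\s*(?P<flare>\d+(?:\.\d+)?)\s*mm\s+flare)?",
    re.IGNORECASE,
)


def extract_readings(text: str) -> List[SkinTestReading]:
    """Return every wheal (and optional flare) measurement found in the text."""
    readings = []
    for match in _READING.finditer(text):
        flare = match.group("flare")
        readings.append(
            SkinTestReading(
                wheal_mm=float(match.group("wheal")),
                flare_mm=float(flare) if flare else None,
            )
        )
    return readings


print(extract_readings("Birch: 4mm wheal, 2mm flare."))
# prints: [SkinTestReading(wheal_mm=4.0, flare_mm=2.0)]
```

Keeping the numbers as typed fields, rather than as free text, is what lets downstream steps treat them as measurements instead of paraphrasing them away.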

What we’re still working on: Complex cases where multiple allergens interact. The AI handles straightforward visits well but still requires physician review for patients with multiple food allergies and environmental sensitivities.

## The Measurement Consistency Challenge

Skin prick testing revealed an unexpected problem: measurement variability between staff members. We discovered that manual wheal measurements could vary by 1 to 2 mm between nurses, which is enough to change clinical interpretation.

This wasn’t a training issue. These were experienced allergy nurses who knew proper measurement technique. The variability came from human factors: lighting conditions, viewing angle, and the inherent subjectivity of measuring irregular wheals.
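A short sketch shows why a 1 to 2 mm spread matters. It assumes a commonly cited positivity criterion, a wheal at least 3 mm larger than the negative control; that cutoff is an assumption here, and a given clinic's criteria may differ. The function names and the example readings are hypothetical and are not data from the clinic.

```python
# Assumed cutoff: wheal at least 3 mm larger than the negative control.
POSITIVE_MARGIN_MM = 3.0


def is_positive(wheal_mm, negative_control_mm=0.0, margin_mm=POSITIVE_MARGIN_MM):
    """Classify a single wheal measurement against the assumed cutoff."""
    return wheal_mm - negative_control_mm >= margin_mm


def spread_mm(readings_mm):
    """Largest disagreement between raters measuring the same wheal."""
    return max(readings_mm) - min(readings_mm)


def interpretation_flips(readings_mm, negative_control_mm=0.0):
    """True if the raters' measurements disagree on positive vs. negative."""
    calls = {is_positive(r, negative_control_mm) for r in readings_mm}
    return len(calls) > 1


# Hypothetical example: two nurses measure the same borderline wheal.
borderline = [2.0, 3.5]
print(spread_mm(borderline), interpretation_flips(borderline))
# prints: 1.5 True

# The same 1.5 mm spread on a clearly positive wheal changes nothing.
clear = [6.0, 7.5]
print(spread_mm(clear), interpretation_flips(clear))
# prints: 1.5 False
```

The variability only changes the call near the cutoff, which is exactly where a consistent measurement method is most valuable.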

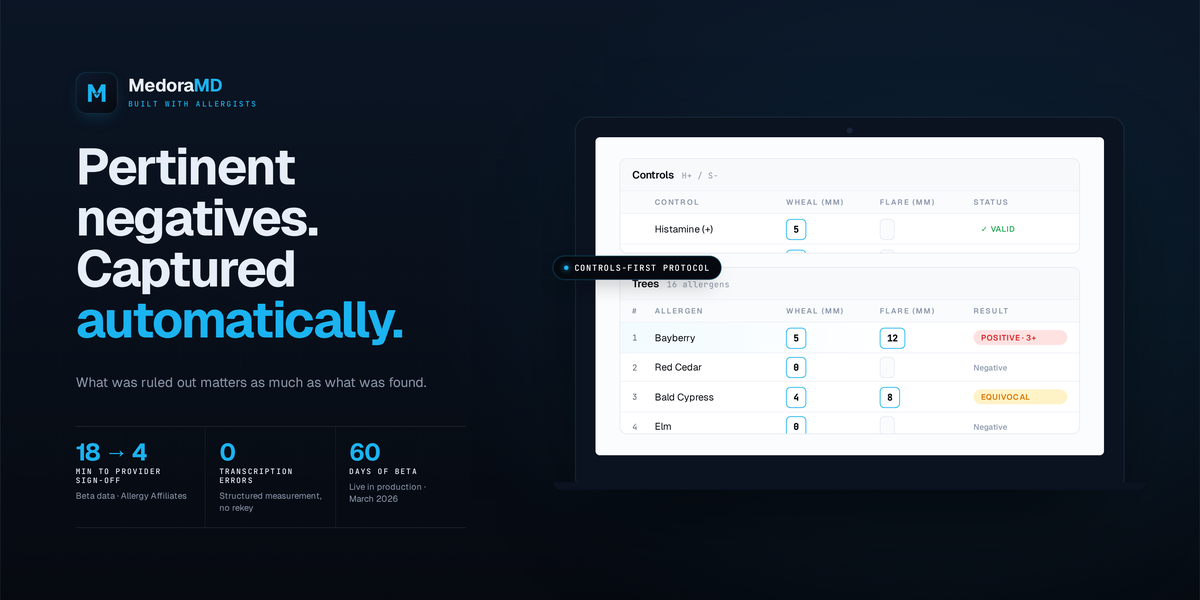

What worked: Photo-based measurement with AI validation. Our Medora Skin Testing module now captures standardized photos and provides consistent measurements across all staff. In 60 days of beta testing at Allergy Affiliates, we measured 312 skin prick tests with consistent results regardless of which nurse administered the test.

The unexpected benefit: Provider sign-off time dropped from 18 minutes to 4 minutes per patient. When allergists receive structured, photo-documented results instead of handwritten notes, they can make treatment decisions faster.
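For scale, the sign-off figures above work out as follows. This is only arithmetic on the two numbers reported here (18 and 4 minutes); the `signoff_savings` helper is a hypothetical name, and no per-day or per-clinic totals are implied.

```python
def signoff_savings(before_min, after_min):
    """Return (minutes saved per patient, percent reduction)."""
    saved = before_min - after_min
    return saved, saved / before_min * 100


saved, pct = signoff_savings(18, 4)
print(f"{saved} minutes saved per patient ({pct:.0f}% reduction)")
# prints: 14 minutes saved per patient (78% reduction)
```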

## The Context Fragmentation Problem

Here’s what no one talks about in AI healthcare discussions: tool switching destroys clinical thinking.

We watched allergists use separate systems for documentation, skin test results, and patient instructions. Each tool required re-entering patient context. The cognitive load wasn’t from complexity — it was from constantly rebuilding the clinical picture.

One allergist described it perfectly: “I’m not thinking about the patient anymore. I’m thinking about which system has which piece of information.”

What worked: Unified patient context across all AI tools. When our Scribe captures a patient’s birch pollen sensitivity, our AllergenIQ module immediately surfaces apple and hazelnut cross-reactivity patterns. The skin testing results feed directly into immunotherapy recommendations. Everything operates on the same patient record.
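One way to picture a unified patient context is a single shared record that modules subscribe to, so a finding written by one tool is immediately visible to the others. The sketch below is an assumption about the general pattern, not a description of Medora's architecture. `PatientContext`, `flag_cross_reactivity`, and the `CROSS_REACTIVITY` table are hypothetical names, and the table contains only the birch example mentioned above.

```python
from dataclasses import dataclass, field

# Illustrative lookup only; the single entry is the example from the article.
CROSS_REACTIVITY = {"birch pollen": ["apple", "hazelnut"]}


@dataclass
class PatientContext:
    """One shared record that every module reads from and writes to."""
    patient_id: str
    sensitivities: set = field(default_factory=set)
    cross_reactivity_flags: dict = field(default_factory=dict)
    _subscribers: list = field(default_factory=list, repr=False)

    def subscribe(self, callback):
        """Register a module to be notified when a sensitivity is added."""
        self._subscribers.append(callback)

    def add_sensitivity(self, allergen):
        """Record a sensitivity once and notify every subscribed module."""
        allergen = allergen.lower()
        if allergen in self.sensitivities:
            return
        self.sensitivities.add(allergen)
        for callback in self._subscribers:
            callback(self, allergen)


def flag_cross_reactivity(ctx, allergen):
    """Hypothetical cross-reactivity module: annotate the shared record."""
    related = CROSS_REACTIVITY.get(allergen)
    if related:
        ctx.cross_reactivity_flags[allergen] = list(related)


ctx = PatientContext(patient_id="demo-001")
ctx.subscribe(flag_cross_reactivity)
ctx.add_sensitivity("Birch pollen")  # e.g. written by the scribe step
print(ctx.cross_reactivity_flags)
# prints: {'birch pollen': ['apple', 'hazelnut']}
```

Because every module writes to the same object, nobody has to re-enter the patient's history when moving from documentation to test results.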

The observable impact: Allergists stopped asking nurses to repeat information they’d already documented. The handoff conversation shifted from “What were the results?” to “What’s your clinical assessment?”

## The Spanish Language Barrier

We underestimated how much language barriers affect allergy care. Detailed symptom histories require nuanced communication. Phone interpreters create delays. Family member translation introduces accuracy concerns.

Our solution started simple: QR codes that patients scan for real-time Spanish↔English interpretation. No app downloads, no phone calls, no scheduling delays.

What worked: Immediate access when allergists need clarification. Medora Interpreter handles allergy-specific terminology that generic translation services miss. “Urticaria” translates correctly as “urticaria” or “ronchas,” not “skin condition.”
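A simple way to get this behavior is to consult a specialty glossary before falling back to a general-purpose translator. The sketch below assumes that approach; it is not a description of how Medora Interpreter is built. `ALLERGY_GLOSSARY_EN_ES` and `translate_term` are hypothetical names, and the glossary holds only the "urticaria" example from the text.

```python
# Hypothetical English-to-Spanish glossary; the single entry is from the article.
ALLERGY_GLOSSARY_EN_ES = {"urticaria": ["urticaria", "ronchas"]}


def translate_term(term, glossary=ALLERGY_GLOSSARY_EN_ES, fallback=None):
    """Prefer specialty glossary entries; use a generic translator otherwise.

    `fallback` is any callable that takes the term and returns a string.
    It stands in for a general-purpose translation service.
    """
    key = term.strip().lower()
    if key in glossary:
        return list(glossary[key])
    if fallback is not None:
        return [fallback(term)]
    return []


print(translate_term("Urticaria"))
# prints: ['urticaria', 'ronchas']
```

The design choice is ordering: the curated term wins whenever one exists, so a generic service never gets the chance to flatten it into "skin condition".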

What surprised us: Patients preferred the privacy. Sensitive topics like food restrictions or medication concerns were easier to discuss without family members translating.

## The Evidence Trail Challenge

Clinical notes should connect back to actual conversations. Too often, AI-generated documentation creates statements that sound clinical but can’t be traced to what the patient actually said.

We built Evidence Mapping to solve this. Every clinical statement in the SOAP note links back to the specific moment in the conversation transcript. If an allergist writes “Patient reports throat tightness with shellfish,” they can click through to hear exactly what the patient said.
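The linking idea can be sketched as a small data structure: each note statement carries the IDs of the transcript segments that support it, and any statement with no resolvable segment is flagged for review. This is a minimal illustration under assumed names (`TranscriptSegment`, `NoteStatement`, `resolve_evidence`, `untraceable`), not the Evidence Mapping implementation, and the transcript text is invented for the example.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class TranscriptSegment:
    segment_id: str
    speaker: str
    start_s: float  # offset into the recording, in seconds
    end_s: float
    text: str


@dataclass
class NoteStatement:
    text: str
    evidence_ids: List[str]  # IDs of supporting transcript segments


def resolve_evidence(statement, transcript):
    """Return the transcript segments a statement points to."""
    by_id = {seg.segment_id: seg for seg in transcript}
    return [by_id[i] for i in statement.evidence_ids if i in by_id]


def untraceable(statements, transcript):
    """Statements with no supporting segment; candidates for physician review."""
    return [s for s in statements if not resolve_evidence(s, transcript)]


# Invented example data, for illustration only.
transcript = [
    TranscriptSegment("seg-1", "patient", 12.0, 17.5,
                      "My throat gets tight when I eat shrimp."),
]
note = [
    NoteStatement("Patient reports throat tightness with shellfish.", ["seg-1"]),
    NoteStatement("No history of asthma.", []),
]

for seg in resolve_evidence(note[0], transcript):
    print(f"{seg.start_s}s to {seg.end_s}s: {seg.text}")
# prints: 12.0s to 17.5s: My throat gets tight when I eat shrimp.

print([s.text for s in untraceable(note, transcript)])
# prints: ['No history of asthma.']
```

Storing time offsets alongside the text is what makes the click-through to the exact moment of audio possible.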

What worked: Increased confidence in AI-assisted documentation. Allergists could verify accuracy without re-listening to entire encounters.

The compliance benefit: Clear audit trails for any clinical statement. This matters for allergy practices, where documentation supports immunotherapy decisions and emergency action plans.

## What We Got Wrong

Not everything worked as planned. Our initial AI models were too aggressive in clinical interpretation. Early versions would suggest diagnoses rather than document symptoms. Allergists rightfully rejected this approach.

We learned that clinical AI should enhance physician decision-making, not attempt to replace it. The AI’s job is accurate documentation and pattern recognition, not clinical judgment.

## The Real ROI: Mental Load Reduction

The biggest impact wasn’t time savings — it was cognitive load reduction. Allergists reported feeling more present during patient encounters when they weren’t mentally tracking documentation requirements.

One physician noted: “I’m listening to understand the patient’s experience, not listening to remember what to write down later.”

## Looking Forward

Six months of live deployment taught us that effective clinical AI requires deep specialty knowledge and unified patient context. Generic tools adapted for allergy care will always feel like adaptations.

The future of allergy practice technology isn’t about adding more AI tools — it’s about creating integrated systems that understand how allergists actually work.

---

## How Medora Supports This Vision

Medora was built specifically for this reality. Our Scribe captures allergy-specific terminology in real-time. Our Skin Testing module provides photo-based measurement consistency. AllergenIQ tracks longitudinal allergen patterns across visits. Every module operates on unified patient context, so allergists spend more time thinking about clinical care and less time managing disconnected tools.

Learn more about how Medora supports allergy practice workflows at medoramd.ai/demo.

What challenges have you seen when implementing new technology in your allergy practice?
