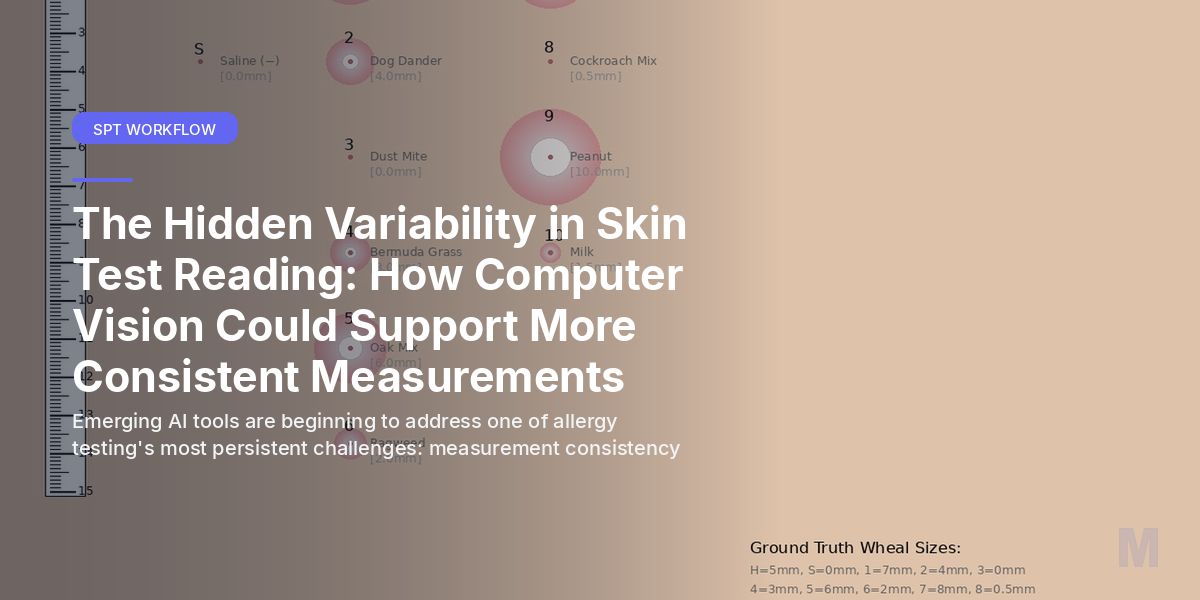

The Hidden Variability in Skin Test Reading: How Computer Vision Could Support More Consistent Measurements

Dr. Sarah Chen paused over the skin test results, measuring the wheal with her ruler for the third time. The reaction looked borderline, somewhere between 2.8 and 3.2 mm, right around the 3 mm cutoff commonly used to call a test positive. Her colleague, reviewing the same patient an hour later, measured it at 3.5 mm. Both are experienced allergists and careful practitioners, yet their measurements differed enough to potentially change the clinical interpretation.

This scenario plays out in allergy clinics daily, highlighting one of our field’s most persistent challenges: the inherent subjectivity in skin prick test interpretation.

The Measurement Challenge We All Face

Skin prick tests remain the gold standard for IgE-mediated allergy diagnosis, but their interpretation relies heavily on visual assessment and manual measurement. Even with standardized protocols, several factors introduce variability:

Human Measurement Variability: Studies suggest that inter-observer variation in wheal measurement can range from 10% to 20%, even among experienced allergists. Viewing angle, lighting conditions, and individual judgment about where the wheal border lies all contribute to this variation.
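To put that range in concrete terms, here is a small illustrative calculation using the two readings from the opening scenario. The 3.0 mm figure is an assumption (the midpoint of the first allergist's 2.8 to 3.2 mm range), and expressing the gap as a percentage of the mean reading is just one reasonable way to quantify disagreement, not necessarily the method used in the studies mentioned above.

```python
def percent_difference(a_mm: float, b_mm: float) -> float:
    """Gap between two readings, as a percentage of their mean."""
    mean = (a_mm + b_mm) / 2
    return abs(a_mm - b_mm) / mean * 100


first_reading_mm = 3.0   # assumed midpoint of the 2.8-3.2 mm range
second_reading_mm = 3.5  # the colleague's reading

print(round(percent_difference(first_reading_mm, second_reading_mm), 1))  # 15.4
```

A half-millimeter gap on a 3 mm wheal is therefore a difference of roughly 15%, which sits inside the range quoted above.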

Timing Considerations: The recommended reading window is 15 to 20 minutes after application, but busy clinic schedules sometimes compress or extend it. Because wheals keep changing after that window, a late or early reading can shift the measurement.

Documentation Inconsistencies: Manual recording of results introduces another layer of potential error, from transcription mistakes to illegible handwriting affecting follow-up care continuity.

Where Computer Vision Shows Promise

Emerging research suggests that AI-powered image analysis could address some of these consistency challenges. Computer vision technology, already proven in dermatology and radiology applications, is beginning to show potential in allergy testing interpretation.

Objective Boundary Detection: Advanced algorithms can analyze skin test photos to identify wheal boundaries more consistently than human observation alone. Early studies indicate that computer vision can detect subtle color and texture changes that define reaction borders, potentially reducing inter-observer variability.

Standardized Measurement Protocols: AI systems can apply the same measurement criteria to every test, reducing the variation that comes from differences in technique between staff members or from time pressure during manual measurement.
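As a rough sketch of what "the same criteria every time" can mean in practice, the snippet below turns a segmented wheal into a single number. It assumes that an earlier step has already produced a binary mask (1 for wheal pixels, 0 for background) and a known scale in millimeters per pixel. The function name and the choice of the equivalent-circle diameter are illustrative assumptions, not a description of how any particular product measures wheals.

```python
import math


def equivalent_diameter_mm(mask, mm_per_pixel):
    """Diameter of the circle that has the same area as the segmented wheal.

    mask: rows of 0/1 values marking wheal pixels (hypothetical input format).
    mm_per_pixel: image scale, e.g. from a reference marker in the photo.
    """
    area_px = sum(1 for row in mask for cell in row if cell)
    area_mm2 = area_px * mm_per_pixel ** 2
    return 2 * math.sqrt(area_mm2 / math.pi)


# A toy 3 x 3 pixel wheal photographed at 1 mm per pixel.
toy_mask = [
    [1, 1, 1],
    [1, 1, 1],
    [1, 1, 1],
]
print(round(equivalent_diameter_mm(toy_mask, 1.0), 2))  # 3.39
```

Whatever rule is chosen, the point is that it is applied identically to every photo, so two staff members capturing the same reaction should get the same number as long as the segmentation and scale are the same.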

Enhanced Documentation: Photo-based systems create permanent visual records of test results, supporting better patient care continuity and enabling retrospective analysis when needed.

Clinical Applications in Development

Several applications of AI-assisted skin test reading are showing promise in early research:

Real-Time Quality Assurance: Computer vision could flag potential measurement discrepancies or suggest remeasurement when results fall near diagnostic thresholds, supporting clinical decision-making rather than replacing clinical judgment.
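A minimal sketch of that kind of check might look like the following. The 3.0 mm threshold, the 0.5 mm margin, and the 0.5 mm allowed gap between manual and automated readings are placeholder assumptions chosen for illustration; a clinic would set its own values, and the function name is hypothetical.

```python
def review_flags(manual_mm, ai_mm, threshold_mm=3.0, margin_mm=0.5, max_gap_mm=0.5):
    """Return reasons a reading might deserve a second look (possibly none)."""
    flags = []
    # The automated reading sits close to the positivity cutoff.
    if abs(ai_mm - threshold_mm) <= margin_mm:
        flags.append("near threshold")
    # The manual and automated readings disagree by more than the allowed gap.
    if abs(manual_mm - ai_mm) > max_gap_mm:
        flags.append("manual/AI discrepancy")
    return flags


print(review_flags(manual_mm=3.5, ai_mm=2.9))  # ['near threshold', 'manual/AI discrepancy']
print(review_flags(manual_mm=6.0, ai_mm=6.2))  # []
```

The function only raises flags. Deciding whether to remeasure, and how to interpret the result, stays with the clinician.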

Training Support: AI systems could assist in training new staff by providing consistent measurement references and highlighting common interpretation challenges.

Research Applications: Standardized digital measurements could improve research data quality in clinical trials and epidemiological studies, where measurement consistency is crucial for valid results.

Current Limitations and Realistic Expectations

While the potential is encouraging, it’s important to acknowledge current limitations. Preliminary research indicates several areas where AI-assisted measurement still faces challenges:

Complex Reaction Patterns: Irregular wheals, confluent reactions, or cases with significant erythema without clear wheal formation remain challenging for current computer vision algorithms.

Environmental Variables: Lighting conditions, camera angles, and skin tone variations can still affect AI measurement accuracy, requiring careful standardization of photo capture protocols.

Clinical Context Integration: AI systems can measure a wheal, but they cannot incorporate the full clinical context, such as patient history and symptoms, that experienced allergists weigh when interpreting results.

Implementation Considerations for Practices

For practices considering AI-assisted skin test reading, several factors warrant consideration:

Workflow Integration: Successful implementation requires seamless integration with existing testing protocols without significantly extending appointment times or creating additional administrative burden.

Staff Training: While AI systems aim to reduce variability, they still require proper training for photo capture techniques and result interpretation to achieve their potential benefits.

Quality Assurance: AI-assisted measurements should complement, not replace, clinical oversight. Experienced allergists should review AI-generated measurements, particularly for borderline cases or complex reaction patterns.

Looking Ahead: Supporting Clinical Excellence

The goal of AI-assisted skin test reading isn’t to replace clinical expertise but to support it. By potentially reducing measurement variability and improving documentation consistency, these tools could free allergists to focus more on clinical interpretation and patient care.

Early testing with allergist partners suggests that computer vision tools like those being developed by Medora could help standardize the measurement process while maintaining the clinical judgment that remains essential for accurate allergy diagnosis. We’re still learning about optimal implementation approaches, but the potential to support more consistent, well-documented allergy testing represents a meaningful step forward for our field.
